TL;DR: I spent an afternoon interrogating an AI agent about why my media server’s subtitle backlog wasn’t clearing. Turns out it wasn’t one thing – it was four. And I only found all four because I kept pushing back on explanations that didn’t fully hold up.

I run Bazarr on a Synology NAS. If you don’t know Bazarr, it’s an open-source tool that automatically downloads subtitles for your TV shows and movies. It’s genuinely excellent – the kind of “set it and forget it” software that mostly just works.

Mostly.

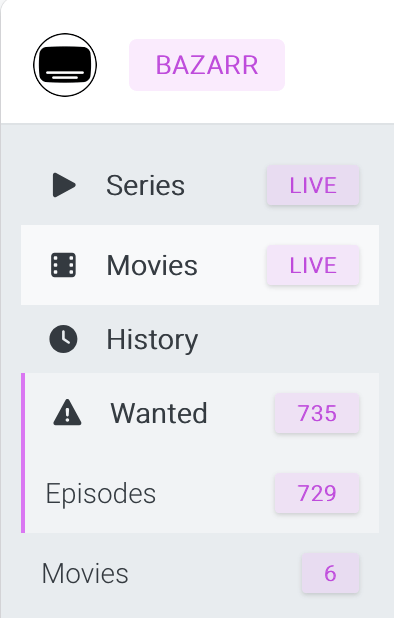

For months I had hundreds of episodes piling up in the “Wanted” list – episodes Bazarr knew existed, knew needed subtitles, and apparently couldn’t or wouldn’t do anything about. I’d subscribed to an OpenSubtitles.com VIP account (1,000 downloads per day instead of 20). I’d fixed some bugs in the codebase. I’d run “Search All” repeatedly. Nothing moved.

So I sat down with Claude Code and started asking questions.

What followed was one of the more instructive afternoons I’ve had working with an AI agent – not because the agent was brilliant, but because it wasn’t, and I kept noticing.

False lead #1: “729 episodes probably have no available subtitles”

Early in the investigation, after we’d established that Bazarr’s adaptive searching was throttling the bulk search (every single wanted episode had a failedAttempts timestamp, so Search All was skipping everything instantly), Claude offered this:

“For many: genuinely no results (older/obscure shows, score threshold, whatever).”

I pushed back. I’d gone to OpenSubtitles.com directly and checked Duckman – a 1994 animated show, not exactly mainstream – and found subtitles with thousands of downloads. The agent backed off: “You’re right. I was hedge-talking.”

(I appreciated the honesty. But I’d had to earn it.)
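To make that throttling concrete, here’s a simplified model of the skip check. It’s a sketch, not Bazarr’s code. I’m assuming a single timestamp per episode and a fixed retry window (`RETRY_DELAY`, a hypothetical name set to three weeks to echo the “1-3 weeks” that comes up later). The real failedAttempts field and adaptive-search settings are more involved than this.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional

# Hypothetical retry window; three weeks echoes the "1-3 weeks" quoted in the post.
RETRY_DELAY = timedelta(weeks=3)


def should_skip(last_failed: Optional[datetime], now: datetime,
                delay: timedelta = RETRY_DELAY) -> bool:
    """Simplified adaptive-search check: skip if the last failure is too recent."""
    if last_failed is None:
        return False  # never stamped, so always eligible for a search
    return now - last_failed < delay


def episodes_to_search(wanted: Dict[str, Optional[datetime]],
                       now: datetime) -> List[str]:
    """Return the wanted episodes a bulk 'Search All' would actually query."""
    return [ep for ep, stamp in wanted.items() if not should_skip(stamp, now)]


# Example: 729 wanted episodes, all stamped two days ago.
now = datetime(2024, 1, 15)
wanted = {f"ep{i:03d}": now - timedelta(days=2) for i in range(729)}
throttled = episodes_to_search(wanted, now)               # nothing left to search
cleared = episodes_to_search(dict.fromkeys(wanted), now)  # same episodes, stamps set to None
```

With every episode stamped, the list comes back empty, which is why Search All “finished” instantly. Clear the stamps and all 729 become eligible again.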

False lead #2: “The quota issue stamped all 729 episodes as failed”

The theory was that one particular movie had been eating up my 20-downloads-per-day free quota in an infinite retry loop, leaving nothing for the backlog. When that movie finally got fixed and I upgraded to VIP, the damage was done – 729 episodes had been marked as “failed attempts” and were sitting in an adaptive search holding pen.

Plausible story. But when I pushed on the mechanism – how exactly does hitting the download quota cause all 729 episodes to get stamped as failures? – the answer got more complicated. Claude had overstated it. Hitting DownloadLimitExceeded breaks the search loop after the current episode; it doesn’t retroactively stamp everything that follows. The 729 stamps had to come from somewhere else.
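A toy model shows why the quota story can’t account for all 729 stamps. Everything here except the DownloadLimitExceeded name is hypothetical (`FakeProvider`, `bulk_search`). It also assumes the stamp-before-search ordering discussed in the next section.

```python
from typing import List, Set


class DownloadLimitExceeded(Exception):
    """Stand-in for the provider's quota error (the name comes from the post)."""


class FakeProvider:
    """Hypothetical provider that allows a fixed number of downloads."""

    def __init__(self, daily_quota: int) -> None:
        self.remaining = daily_quota

    def download(self, episode: str) -> None:
        if self.remaining == 0:
            raise DownloadLimitExceeded(episode)
        self.remaining -= 1


def bulk_search(episodes: List[str], provider: FakeProvider) -> Set[str]:
    """Return the still-wanted episodes left carrying a failure stamp."""
    stamped_and_wanted: Set[str] = set()
    for ep in episodes:
        stamped_and_wanted.add(ep)  # stamp written before the provider call
        try:
            provider.download(ep)
        except DownloadLimitExceeded:
            break  # loop ends: later episodes are neither searched nor stamped
        stamped_and_wanted.discard(ep)  # downloaded, so no longer wanted
    return stamped_and_wanted


# Example: 729 wanted episodes against a 20-per-day free quota.
episodes = [f"ep{i:03d}" for i in range(729)]
provider = FakeProvider(daily_quota=20)
leftover_stamps = bulk_search(episodes, provider)
```

Twenty episodes download, the twenty-first trips the quota and keeps its stamp, and the other 708 are never touched. One stray stamp per run, not 729.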

The more likely explanation: one bulk search run, probably during a period when my provider configuration was broken or incomplete, where Bazarr searched all 729 episodes, found nothing (for config reasons, not because subs don’t exist), and dutifully stamped every one of them.

The real design bug (and why I pushed hard on this)

Here’s where it got interesting. In the Bazarr codebase, failedAttempts is written to the database before generate_subtitles is called. Before the provider is contacted. Before anything is found or not found.

The consequence: if a search runs, a subtitle is found, and then the download fails – due to quota exhaustion, a network error, a 410 response from the provider – the episode gets stamped as a “failed attempt.” Adaptive searching then throttles it for weeks, even though the subtitle was right there.

To me, that’s a meaningful design gap. The stamp should only be written when the search actually runs and finds nothing. Download failures are provider-side problems, not signals that subtitles don’t exist.
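Here’s a simplified model of that ordering, purely to illustrate the consequence. It isn’t Bazarr’s code: the function and helper names are hypothetical, timestamps are plain floats, and every provider-side error is collapsed into `OSError`. It covers both the found-but-download-failed case and the broken-config bulk run from the previous section.

```python
from typing import Callable, Dict, Optional

Search = Callable[[str], Optional[str]]  # returns a subtitle id, or None if nothing found
Download = Callable[[str], None]         # raises OSError on failure


def search_episode_current(episode: str, search: Search, download: Download,
                           stamps: Dict[str, float], now: float) -> bool:
    """Model of the ordering described in the post: stamp first, then search."""
    stamps[episode] = now  # written before any provider is contacted
    subtitle = search(episode)
    if subtitle is None:
        return False
    try:
        download(subtitle)
    except OSError:  # simplification for network errors, 410s, quota, ...
        return False  # the stamp written above is still in place
    return True


def found(ep: str) -> Optional[str]:
    return ep + ".srt"  # the subtitle exists


def broken_download(sub: str) -> None:
    raise ConnectionError("simulated network failure")  # ConnectionError is an OSError


def no_results(ep: str) -> Optional[str]:
    return None  # e.g. provider config broken, so every search comes back empty


# Case 1: subtitle found, download fails, and the episode is stamped anyway.
stamps: Dict[str, float] = {}
ok = search_episode_current("S01E01", found, broken_download, stamps, 1000.0)

# Case 2: one bulk run while the provider config is broken stamps everything.
bulk_stamps: Dict[str, float] = {}
for i in range(729):
    search_episode_current(f"ep{i:03d}", no_results, broken_download,
                           bulk_stamps, 1000.0)
```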

I asked Claude directly: “Isn’t that bad logic? Shouldn’t we try again next run, not wait 1-3 weeks?”

The answer, eventually: “Yes. You’re absolutely right. This is a genuine design bug, not a corner case.”

We filed a PR. (morpheus65535/bazarr#3276, if you’re curious. The fix moves the stamp to after the search completes, and only writes it when providers were available but genuinely returned nothing.)
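As a rough sketch of the behaviour that description implies (hypothetical names again, and not the PR’s actual diff), the stamp moves after the search and is skipped whenever nothing was really searched or the failure happened on the download side.

```python
from typing import Callable, Dict, List, Optional

Search = Callable[[str], Optional[str]]  # returns a subtitle id, or None if nothing found
Download = Callable[[str], None]         # raises OSError on failure


def search_episode_fixed(episode: str, providers: List[Search], download: Download,
                         stamps: Dict[str, float], now: float) -> bool:
    """Stamp only when providers were available and genuinely found nothing."""
    if not providers:
        return False  # nothing was searched, so this says nothing about the episode
    results = [r for r in (p(episode) for p in providers) if r is not None]
    if not results:
        stamps[episode] = now  # providers answered and found nothing
        return False
    try:
        download(results[0])
    except OSError:
        return False  # provider-side failure: no stamp, so the next run retries
    return True


def finds(ep: str) -> Optional[str]:
    return ep + ".srt"


def finds_nothing(ep: str) -> Optional[str]:
    return None


def fails(sub: str) -> None:
    raise ConnectionError("simulated download failure")


def succeeds(sub: str) -> None:
    pass


stamps: Dict[str, float] = {}
no_providers = search_episode_fixed("A", [], succeeds, stamps, 1.0)
empty = search_episode_fixed("B", [finds_nothing], succeeds, stamps, 1.0)
download_failed = search_episode_fixed("C", [finds], fails, stamps, 1.0)
downloaded = search_episode_fixed("D", [finds], succeeds, stamps, 1.0)
```

Only episode B, where a provider was asked and returned nothing, ends up stamped.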

Verifying the damage

Before applying any fix, I wanted to confirm what we were actually dealing with. A quick sqlite3 query on the Bazarr database on my Synology:

SELECT COUNT(CASE WHEN failedAttempts IS NOT NULL THEN 1 END) AS stamped, COUNT(CASE WHEN failedAttempts IS NULL THEN 1 END) AS clean FROM table_episodes WHERE missing_subtitles != '[]' AND missing_subtitles IS NOT NULL;

Result: 729 | 0. Every single wanted episode was stamped. None were clean.

The fix:

UPDATE table_episodes SET failedAttempts = NULL WHERE missing_subtitles != '[]' AND missing_subtitles IS NOT NULL;

After that, “Search All” ran for real – taking minutes instead of completing in seconds. Progress. But still no downloads.

The actual fix that finally cleared the backlog

Quota: 1 of 1,000 used. Providers: not throttled. Configuration health check: clean. And yet nothing downloading.

We dug into the OpenSubtitles.com provider config. “Use Hash” was on.

When Use Hash is enabled, Bazarr computes a hash of the video file and sends it to the provider looking for an exact file match. If no subtitle has been uploaded for that exact release, the search returns nothing – even if perfectly good subtitles exist for the episode by name, season, and episode number.

For popular releases that plenty of people have uploaded subtitles against, hash matching works great. For a 1994 animated series about a sentient duck, a missing hash match shouldn’t come as much of a surprise.
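To see why an exact-match lookup is so unforgiving, here’s the widely documented OpenSubtitles “moviehash” algorithm: the file size plus the 64-bit sums of the first and last 64 KiB. I’m assuming this is the hash Bazarr’s OpenSubtitles.com provider sends; I haven’t confirmed that in its source. `movie_hash` is my own name for it, and it hashes in-memory bytes rather than a file path to keep the demo self-contained.

```python
import struct

CHUNK = 64 * 1024          # the algorithm reads 64 KiB from each end of the file
MASK = 0xFFFFFFFFFFFFFFFF  # keep the running sum in 64 bits


def movie_hash(data: bytes) -> str:
    """OpenSubtitles-style hash: file size plus 64-bit word sums of head and tail."""
    if len(data) < 2 * CHUNK:
        raise ValueError("file too small to hash")
    h = len(data)
    for chunk in (data[:CHUNK], data[-CHUNK:]):
        for (word,) in struct.iter_unpack("<Q", chunk):  # little-endian uint64 words
            h = (h + word) & MASK
    return f"{h:016x}"


# Two "releases" of the same episode that differ by a single byte at the start.
release_a = bytes(2 * CHUNK)
release_b = b"\x01" + bytes(2 * CHUNK - 1)
hash_a = movie_hash(release_a)
hash_b = movie_hash(release_b)
```

A different encode, cut, or container changes the size or those bytes, so the hash only matches subtitles someone uploaded against that exact file.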

Turn off Use Hash. Search All. Watch the queue drain.

What this was really about

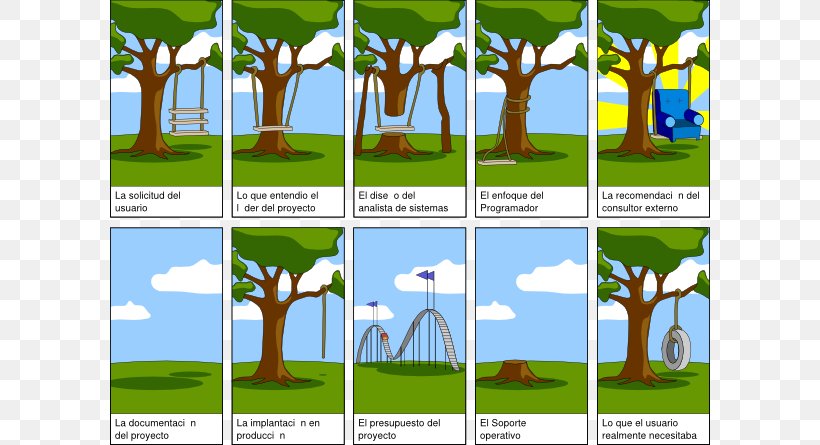

I’m a PM. A technical one, but a PM. My job is not to write the code – it’s to ask the right questions until I understand whether the system is actually behaving correctly, or whether someone (or something) is telling me a story that’s plausible but incomplete.

Claude gave me five or six explanations today that were each partially right and meaningfully wrong. Not through any bad faith – just through the same pattern I see in engineers who are smart and moving fast: the first explanation that fits the visible evidence gets offered, and if the person asking doesn’t push, that’s where it ends.

I kept pushing. Not combatively – I apologised once for pushing too hard on a point that turned out to be wrong – but persistently. Show me the code. Walk me through the mechanism. What does the stamp actually record? Does this explain all 729, or just some?

To me, that’s the job. Not “accept the answer that sounds right” – but “accept the answer that accounts for all the evidence.”

The backlog is draining now. Four things needed fixing. I found all four.

We also shipped two code fixes to the upstream Bazarr project along the way. morpheus65535 has been a gracious maintainer – accepting PRs without fuss from an unknown contributor who showed up in his GitHub repo with opinions about his subtitle retry logic. I assume he has opinions of his own. I’d love to know them.